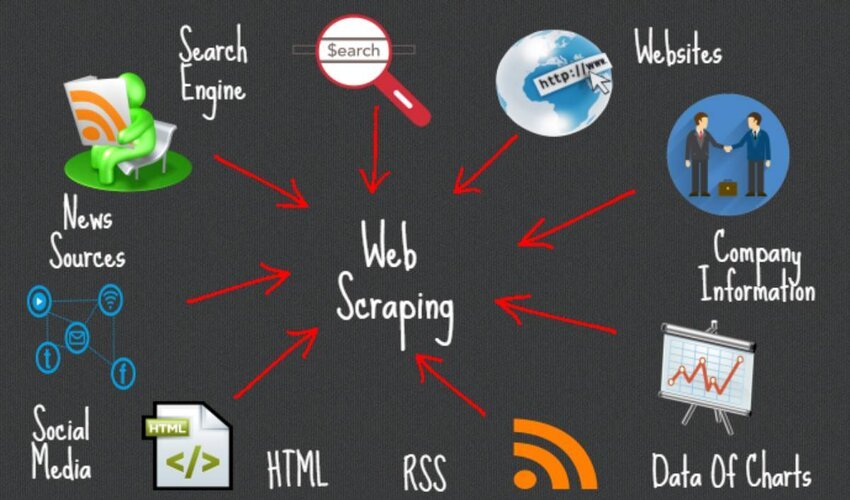

Web scraping has become vital for extracting valuable data from websites. It helps entrepreneurs and strategists meet very specific demands for datasets from relevant or niche websites. That is why the global market for such software was valued at US$330 million in 2022. This value is likely to rise to US$363 million by 2023 and US$1,469 million by 2033.

There are two ways to scrape the web: using dedicated tools or using APIs. Tools are numerous, but unfortunately not all of them are free. Below are the web scraping tools that are free and effective in terms of features and capabilities.

- BeautifulSoup

First on the list is BeautifulSoup, a Python library that makes it easy to parse HTML and XML documents. It also offers several other features:

- It makes it easy to navigate and search the parsed tree structure, moving from one element to its parents, children, or siblings.

- It supports several parsers, including lxml, html5lib, and Python's built-in html.parser, and exposes the parsed source through the same interface whichever one is used.

- You can easily extract data with CSS selectors. BeautifulSoup itself does not support XPath expressions; for those, use the lxml library directly.

- It handles poorly formatted HTML gracefully and recovers from markup errors instead of failing.

- It is the best fit for those who have small to medium-sized scraping needs.
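
As an illustration of the features above, here is a minimal sketch that parses a small HTML snippet with the built-in `html.parser` and pulls out fields with CSS selectors. The markup, the class names, and the `extract_books` helper are made up for this example and are not taken from any real site.

```python
from bs4 import BeautifulSoup

# Hypothetical markup standing in for a downloaded page.
HTML = """
<html><body>
  <ul id="books">
    <li class="book"><a href="/b/1">Dune</a> <span class="price">$9.99</span></li>
    <li class="book"><a href="/b/2">Emma</a> <span class="price">$7.50</span></li>
  </ul>
</body></html>
"""


def extract_books(html):
    """Return one dict per <li class="book"> element found in the HTML."""
    soup = BeautifulSoup(html, "html.parser")
    books = []
    # select() takes a CSS selector and returns all matching tags.
    for item in soup.select("li.book"):
        link = item.find("a")                    # first <a> inside the item
        price = item.select_one("span.price")    # first matching <span>
        books.append({
            "title": link.get_text(strip=True),
            "url": link["href"],
            "price": price.get_text(strip=True),
        })
    return books


books = extract_books(HTML)
```

In a real project the HTML would usually come from an HTTP client rather than a string, and swapping `"html.parser"` for `"lxml"` or `"html5lib"` only requires that the corresponding package is installed.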

- Scrapy

Scrapy is an open-source, collaborative web crawling framework written in Python. It offers several facilities, which are given below:

- It handles large-volume web scraping projects with asynchronous requests.

- It also enables data extraction using XPath or CSS selectors.

- It has a built-in request scheduler that queues requests automatically, along with robust error handling.

- It supports middleware, which makes handling proxies, user agents, and cookies easier.

- It includes features like automatic retries, caching, and built-in spider management.

- Selenium

Selenium is an automation tool whose primary use is testing websites. It is also extremely effective for extracting data from them. It is one of the most preferred tools for getting web data because of these features:

- It supports various programming languages, including Python, Java, and C#, for writing extraction scripts.

- It provides a browser automation framework to simulate user interactions.

- It handles dynamic websites and JavaScript-rendered content with ease.

- It extracts data by interacting with page elements, for example by filling in forms and clicking buttons.

- It is well suited to scraping websites that rely heavily on AJAX requests.

- Octoparse

Octoparse is a user-friendly visual scraping tool with a point-and-click interface. It is a household name among those who work with data or big data because of these features:

- It runs smoothly on both Windows and Mac operating systems.

- It is a strong choice if you target dynamic websites, AJAX-based pages, and APIs for extracting data.

- Instead of making you build every template from scratch, it comes with pre-built templates for popular websites. You can also create your own templates for customized scraping.

- It is equipped with advanced features like XPath editing, data cleaning, and scheduling, which take much of the work off your hands.

- It exports extracted data in various formats, including Excel and CSV, or directly to databases, to fulfill diverse requirements.

- ParseHub

ParseHub is a visual web scraping tool that simplifies extraction from websites. It is a desktop application for Windows, Mac, and Linux. Its features include the following:

- It allows point-and-click selection of elements, which makes extracting specific pieces of information easy.

- It offers advanced features like nested data extraction, pagination, and conditional scraping.

- Users can export data in multiple formats or integrate with other tools via APIs.

- It has built-in proxy and JavaScript rendering capabilities.

- It offers a free tier with limits on the number of projects and on data storage.

- Import.io

Import.io is a web-based platform that extracts useful details from websites and transforms them into ready-to-use datasets. Its advanced features support quick and efficient scraping of any piece of information:

- Elements are selected through a point-and-click interface.

- It offers automated extraction using machine learning algorithms or patterns.

- You can schedule extractions and also get real-time data with the help of those ML algorithms.

- For exporting, it also offers various formats and integration with other systems.

- It stands out for supporting collaborative scraping, with sharing features for teams.

Conclusion

Web scraping is an innovative technique for extracting data, and nowadays it increasingly makes use of machine learning algorithms. Having the right tools can significantly enhance the process. There are many effective free web scraping tools. From BeautifulSoup's simplicity to Scrapy's scalability and Selenium's dynamic website handling, these tools offer a range of capabilities for data extraction. Octoparse, ParseHub, and Import.io provide user-friendly interfaces and advanced features to cater to different scraping needs. By leveraging these free web scraping tools, you can unlock the power of data extraction and gain valuable insights from the vast web resources available.

BIO

Rahul is a data scientist and analyst who is achieving new milestones every day while being associated with Eminenture. His curiosity pushes him to learn and share what he finds amazing and really useful, especially when it comes to deriving machine learning algorithms. The blogs or articles that he shares have a big fan following, which inspires him to provide information.